Machine learning (ML) and AI are transforming the way we interact with data and life around us. From a consumer’s perspective, what excites me the most about ML and AI is that they have the potential of enabling technology to break free from the small confines of machines. Computer technologies, as we use them now, are extremely rigid. They expect input in a specific format. They act in a predefined way. (Computers are still dull.) They are not integrated into our lives or the world around us. ML and AI are the key for technology to make the transition to *live* with us, because they would enable technologies that can interact with unpredictable environments and continuously adapt to changes.

ML and AI have been extremely successful in many areas ranging from data analytics and getting insights from large amounts of data to huge leaps in image and audio processing. However, I look at these advances as just being part of the first wave of the impact of ML and AI. What I, and many others, predict is that ML and AI will be integrated into every aspect of our lives, enabling computing and technology to fulfill the prophecies of early technologists and science fiction writers. Sometime in the near future, we will transition to the (second wave) of ML and AI where everything around us is continuously analyzing and acting upon the environment around it.

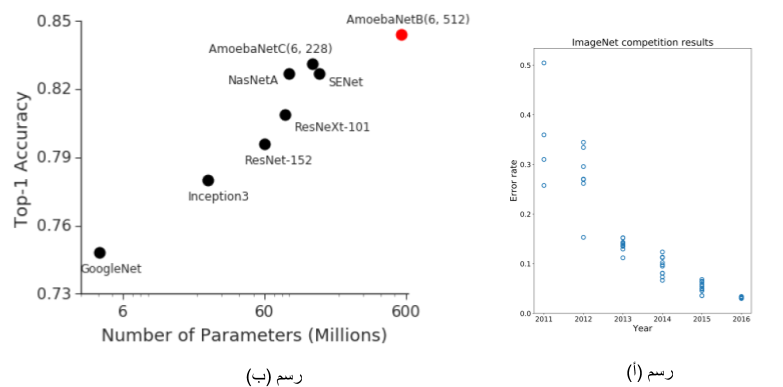

A natural question now is: what is stopping ML and AI from making this transition to the (second wave), enabling technology to make the leap from dull boxed machines to the world at large? One way to answer this question is to look at the success of the first wave of ML and AI, and project the pattern of that success onto our predicted (second wave). The first wave of ML and AI is the result of research and theoretical foundations that date back many decades. However, because the ML and AI foundations existed for many decades prior to their overwhelming impact in the last decade, there must be another factor that contributed to the materialization of their impact. This factor is efficient data processing technologies. In particular, Big Data technologies enabled the management of large amounts of data, which made it possible to apply ML and AI technologies to huge amounts of data and build models that were not feasible before.

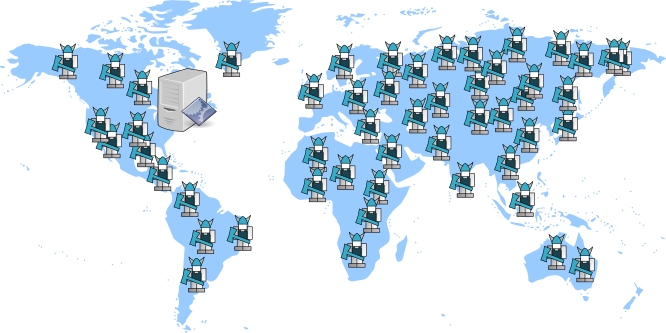

We are now experiencing the same pattern of the first wave of ML and AI, but this time for the (second wave). The ML and AI foundations are more than sufficient to handle many of the immediate real applications of the (second wave). However, they remain largely unrealized due to the practical challenges of deploying them. Similar to the first wave, these practical challenges are mostly related to building an efficient data infrastructure that could process and analyze data using ML and AI technologies at the scale and time requirements of emerging applications in the (second wave).

So, what are these practical challenges that are stopping the realization of the (second wave) of ML and AI? Each application might have some unique challenges associated with it. However, there are common characteristics among many of the emerging applications of ML and AI. These characteristics are related to the nature of these applications being more personal and more integrated with a “real” environment. This leads to the following common features:

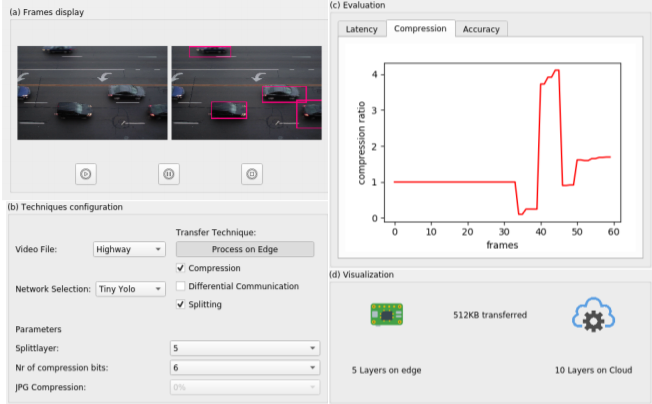

(1) The production and consumption of data are immediate, thus requiring real-time processing and a fast feedback loop. This has the side effect that the processing and analysis of data must happen close to where data is produced and consumed.

(2) The environment continuously changes and there is a need to adapt to these changes in real-time. This means that the learning process is itself continuous and must be done in infrastructure that is close to the data sources and sinks.

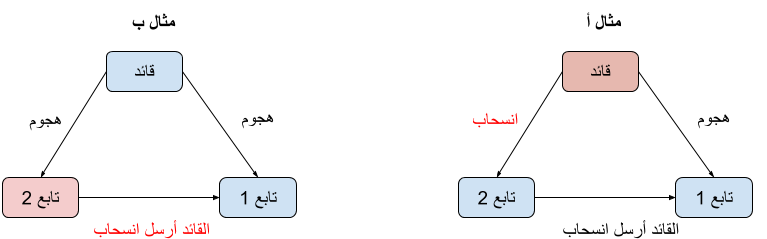

(3) ML and AI applications can no longer have a narrow view of their surroundings. Instead, they must interact with each other. These interactions can take various forms, ranging from two models/applications cooperating with each other to models/applications having a hierarchical relationship where some control others.

These three features cannot be supported by the current data processing technology and infrastructure. Luckily, the foundation to support these features has been laid by the mobility, edge, and networking communities, which have investigated issues of real-time cooperation and processing in edge and mobile environments close to data sources and sinks. However, what is missing is the last step in realizing the data infrastructure for the (second wave) of ML and AI that requires evolving data processing and integrating it with both mobile edge and ML/AI technologies.

I am excited by the research and practical problems that need to be solved to evolve data processing to support the (second wave) of ML and AI. What is interesting is that they touch many aspects of data management and potentially transform them. Here are some of these aspects:

(1) What are the right data access and management abstractions that we need to provide to developers? The current approach provides a one-dimensional view of data access, where we only care about the name (key) of data and its value, and this is no longer sufficient. Data access abstractions must factor in other aspects, such as the richer type of processing that involves learning, inference, and processing all in the same scope. The data access abstraction must also factor in the inaccuracy and evolving nature of models, where a result that is correct now might not be correct later. Finally, the programming abstraction must factor in real-time properties and provide clear control over what needs to be done immediately, what can be delayed, and how these two classes interact.

(2) What are the right distributed coordination and learning protocols? Currently, coordination protocols are built for a cloud environment where the participants are smaller in number and relatively more reliable. Processing and learning in real time requires a less reliable infrastructure that is closer to users, and the integration and interaction between applications push coordination to a larger scale, both in the number of nodes and in the distances between them. Together, these factors invite the investigation of robust protocols that can run in such environments and be resilient to the unpredictable and dynamic nature of edge environments.

(3) What are the right security and privacy mechanisms for (second wave) applications? One implication of the scale, and of the need for processing and learning to be done in close proximity to users, is that the compute infrastructure for one application would likely be owned and/or operated by multiple entities. In addition, multiple applications from multiple control domains might need to coordinate with each other to provide the integration needed in (second wave) applications. Running across multiple control domains and entities that might not trust each other requires security and privacy mechanisms. This is a challenging problem, as these mechanisms cannot impose a significant overhead that interferes with the real-time requirements and efficient processing at the edge.
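
To make aspect (1) a little more concrete, here is a minimal Python sketch of what a richer data access abstraction might look like. Everything in it is hypothetical and only for illustration: the names `LearnedStore`, `Reading`, `infer`, `learn`, and `is_current`, the confidence score, and the toy models are my own assumptions, not an existing system or API. The sketch shows three ideas from the discussion above: reads return more than a bare value, a result carries the version of the model that produced it so that it can later be recognized as stale, and immediate work is separated from work that can be delayed.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A model maps a raw value to (predicted value, confidence). Hypothetical shape.
Model = Callable[[float], Tuple[float, float]]
Observation = Tuple[str, float]


@dataclass(frozen=True)
class Reading:
    """Result of an inference read: the value plus how much to trust it."""
    value: float
    model_version: int  # version of the model that produced the value
    confidence: float   # illustrative score in [0, 1]


class LearnedStore:
    """Hypothetical store that combines key-value data with a model."""

    def __init__(self, model: Model) -> None:
        self._data: Dict[str, float] = {}
        self._model = model
        self._model_version = 1
        self._deferred: List[Observation] = []

    def put(self, key: str, value: float) -> None:
        # Immediate path: the raw value becomes visible right away.
        self._data[key] = value
        # Deferred path: the observation is queued for later learning.
        self._deferred.append((key, value))

    def infer(self, key: str) -> Reading:
        # Immediate path: run inference with whatever model is current.
        value, confidence = self._model(self._data[key])
        return Reading(value, self._model_version, confidence)

    def is_current(self, reading: Reading) -> bool:
        # A result that was correct earlier may be stale after retraining.
        return reading.model_version == self._model_version

    def learn(self, update: Callable[[List[Observation]], Model]) -> None:
        # Deferred path: consume queued observations and install a new model.
        self._model = update(self._deferred)
        self._deferred = []
        self._model_version += 1


# Usage with toy models (the numbers carry no meaning).
store = LearnedStore(lambda x: (x * 2.0, 0.5))
store.put("sensor", 3.0)
first = store.infer("sensor")

# Later, off the critical path, the model is replaced.
store.learn(lambda batch: (lambda x: (x * 3.0, 0.9)))
second = store.infer("sensor")
stale = not store.is_current(first)
```

In this sketch, `put` and `infer` stand in for what must happen immediately, while `learn` stands in for what can be delayed. The version check is one simple way to expose the fact that an earlier answer may no longer hold.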

Solving these challenges is attainable in the near future, hopefully enabling the (second wave) of ML and AI applications. However, unlike many traditional problems in systems, tackling them requires taking a global view that sits at the intersection of many areas. How all this is going to turn out is difficult to predict, and I cannot wait to see it!